Q-Learning introduction and Q Table - Reinforcement Learning w/ Python Tutorial p.1

Welcome to a reinforcement learning tutorial. In this part, we're going to focus on Q-Learning.

Q-Learning is a model-free form of machine learning, in the sense that the AI "agent" does not need to know or have a model of the environment that it will be in. The same algorithm can be used across a variety of environments.

For a given environment, everything is broken down into "states" and "actions." The states are observations and samplings that we pull from the environment, and the actions are the choices the agent has made based on the observation. For the purposes of the rest of this tutorial, we'll use the context of our environment to exemplify how this works.

While our agent doesn't actually need to know anything about our environment, it's useful for you to understand how the environment works while you're learning how Q-learning works!

We're going to be working with OpenAI's gym, specifically with the "MountainCar-v0" environment. To get gym, just do a pip install gym.

Okay, now let's check out this environment. Most of these basic gym environments are very much the same in the way they work. To initialize the environment, you call gym.make(NAME), then you call env.reset() to reset it, then you enter into a loop where you call env.step(ACTION) every iteration. Let's poke around this environment:

import gym

env = gym.make("MountainCar-v0")

print(env.action_space.n)

For the various environments, we can query them for how many actions/moves are possible. In this case, there are "3" actions we can pass. This means, when we step the environment, we can pass a 0, 1, or 2 as our "action" for each step. Each time we do this, the environment will return to us the new state, a reward, whether or not the environment is done/complete, and then any extra info that some envs might have.

It doesn't matter to our model, but, for your understanding, a 0 means push left, 1 is stay still, and 2 means push right. We won't tell our model any of this, and that's the power of Q-learning. This information is basically irrelevant to it. All the model needs to know is what the options for actions are, and what the reward of performing a chain of those actions would be given a state. Continuing along:

import gym

env = gym.make("MountainCar-v0")

env.reset()

done = False

while not done:

    action = 2  # always go right!

    _, _, done, _ = env.step(action)  # keep "done" so the loop ends with the episode

    env.render()

As you can see, despite asking this car to go right constantly, it just doesn't quite have the power to make it. Instead, we need to build momentum to reach that flag, and to do that, we'd want to move back and forth. We could program a function to do this task for us, or we can use Q-learning to solve it!

How will Q-learning do that? So we know we can take 3 actions at any given time. That's our "action space." Now, we need our "observation space." In the case of this gym environment, the observations are returned from resets and steps. For example:

import gym

env = gym.make("MountainCar-v0")

print(env.reset())

Will give you something like [-0.4826636 0. ], which is the starting observation state. While the environment runs, we can also get this information:

import gym

env = gym.make("MountainCar-v0")

state = env.reset()

done = False

while not done:

    action = 2

    new_state, reward, done, _ = env.step(action)

    print(reward, new_state)

At each step, we get the new state, the reward, whether or not the environment is done (either we beat it or exhausted our limit of 200 steps), and then a final "extra info" is returned, but, in this environment, this final return item is not used. Gym throws it in there so we can use the same reinforcement learning programs across a variety of environments without the need to actually change any of the code.

Output from the above:

-1.0 [-0.26919024 -0.00052001]

-1.0 [-0.27043839 -0.00124815]

-1.0 [-0.2724079 -0.00196951]

-1.0 [-0.27508804 -0.00268013]

-1.0 [-0.27846408 -0.00337604]

-1.0 [-0.28251734 -0.00405326]

-1.0 [-0.28722515 -0.00470781]

-1.0 [-0.29256087 -0.00533573]

-1.0 [-0.29849394 -0.00593307]

Okay so we can see the reward is just simply -1 always so far. Then we see the observation is yet again these 2 values.

And again, to our agent, what these values are...really doesn't matter. But, for your curiosity, the values are position (along an x/horizontal axis) and velocity. So, we can see above that the car was moving left, for example, because velocity is negative.

With a general position, and a velocity, we could *definitely* come up with some sort of algorithm that could calculate whether or not we'd make it to the flag, or if we should instead reverse again to build more momentum, so we hope Q learning can do the same. These 2 values are our "observation space." This space can be of any size, but, the larger it gets, the much larger the Q Table becomes!
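As a point of comparison, here is a minimal sketch of the kind of hand-written rule the paragraph above has in mind: always push in the direction the car is already moving. The function name `momentum_policy` is made up for this illustration, and the rule is a common rule of thumb rather than anything provided by gym. It assumes the action numbering (0 = left, 2 = right) and the [position, velocity] layout described earlier.

```python
def momentum_policy(state):
    """Hand-written rule (illustrative): push the way the car is already moving."""
    position, velocity = state  # position is unused by this simple rule
    if velocity < 0:
        return 0  # moving left, so push left to swing higher up the left slope
    return 2      # moving right (or at rest), so push right


# Observations copied from the outputs shown earlier in this tutorial.
print(momentum_policy([-0.4826636, 0.0]))          # 2
print(momentum_policy([-0.27508804, -0.00268013]))  # 0
```

Q-learning gets no such rule handed to it. It has to find something comparable on its own, from rewards alone.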

What's a Q Table!?

The way Q-Learning works is there's a "Q" value per action possible per state. This creates a table. In order to figure out all of the possible states, we can either query the environment (if it is kind enough to us to tell us)...or we just simply have to engage in the environment for a while to figure it out.

In our case, we can query the environment to find out the possible ranges for each of these state values:

print(env.observation_space.high)

print(env.observation_space.low)

[0.6 0.07]

[-1.2 -0.07]

For the value at index 0, we can see the high value is 0.6, the low is -1.2, and then for the value at index 1, the high is 0.07, and the low is -0.07. Okay, so these are the ranges, but from one of the above observation states that we output: [-0.27508804 -0.00268013], we can see that these numbers can become quite granular. Can you imagine the size of a Q Table if we were going to have a value for every combination of these ranges out to 8 decimal places? That'd be huge! And, more importantly, it'd be useless. We don't need that much granularity. So, instead, what we want to do is convert these continuous values to discrete values. Basically, we want to bucket/group the ranges into something more manageable.
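To put a number on "huge," here is a rough back-of-the-envelope sketch. It assumes every observation rounded to a given number of decimal places would count as its own state, and it hard-codes the ranges printed above. The helper names `count_values` and `table_entries` are made up for this illustration.

```python
N_ACTIONS = 3  # env.action_space.n for MountainCar-v0


def count_values(low, high, decimals):
    """Number of distinct values from low to high (inclusive) at a given precision."""
    scale = 10 ** decimals
    return round(high * scale) - round(low * scale) + 1


def table_entries(decimals):
    """Q values needed if every rounded (position, velocity) pair were its own state."""
    positions = count_values(-1.2, 0.6, decimals)
    velocities = count_values(-0.07, 0.07, decimals)
    return positions * velocities * N_ACTIONS


print(f"{table_entries(8):,}")  # roughly 7.5 quadrillion entries
print(f"{table_entries(2):,}")  # 8,145
```

The exact counts matter less than the scale: at 8 decimal places the table could never fit in memory, let alone be visited often enough to learn anything.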

We'll use 20 groups/buckets for each range. This is a variable you might decide to tweak later.

DISCRETE_OS_SIZE = [20, 20]

discrete_os_win_size = (env.observation_space.high - env.observation_space.low)/DISCRETE_OS_SIZE

print(discrete_os_win_size)

[0.09 0.007]

So this tells us how large each bucket is, basically how much to increment the range by for each bucket. We can build our q_table now with:

import numpy as np

...

q_table = np.random.uniform(low=-2, high=0, size=(DISCRETE_OS_SIZE + [env.action_space.n]))

So, this is a 20x20x3 shape, which has initialized random Q values for us. The 20 x 20 bit is every combination of the bucket slices of all possible states. The x3 bit is for every possible action we could take.

As you can likely already see...even this simple environment has a pretty large table: 20 x 20 x 3 = 1,200 values. We have a Q value for every action in every possible (bucketed) state!
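To see how a continuous observation lands in this table, here is a minimal sketch. The bounds are hard-coded from the output above so it runs without gym, the helper name `get_discrete_state` is an assumption for illustration, and the clipping step is an added safeguard (not something gym does for you) so that an observation sitting exactly on the upper bound still falls in the last bucket.

```python
import numpy as np

# Values printed earlier in this tutorial, hard-coded so the sketch runs without gym.
OBS_HIGH = np.array([0.6, 0.07])
OBS_LOW = np.array([-1.2, -0.07])
DISCRETE_OS_SIZE = [20, 20]
N_ACTIONS = 3  # env.action_space.n for MountainCar-v0

discrete_os_win_size = (OBS_HIGH - OBS_LOW) / DISCRETE_OS_SIZE
q_table = np.random.uniform(low=-2, high=0, size=(DISCRETE_OS_SIZE + [N_ACTIONS]))


def get_discrete_state(state):
    """Map a continuous [position, velocity] observation to a pair of bucket indices."""
    scaled = (np.asarray(state) - OBS_LOW) / discrete_os_win_size
    # Clip so values on (or past) the bounds still map to a valid bucket.
    indices = np.clip(scaled.astype(int), 0, np.array(DISCRETE_OS_SIZE) - 1)
    return tuple(int(i) for i in indices)


discrete_state = get_discrete_state([-0.27508804, -0.00268013])
print(discrete_state)           # (10, 9)
print(q_table[discrete_state])  # the 3 (random) Q values stored for that bucket
```

Indexing the table with the tuple of bucket indices returns one row of 3 numbers, one per action.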

So these values are random, and the choice to be between -2 and 0 is also a variable. Each step is a -1 reward, and the flag is a 0 reward, so it seems to make sense to make the starting point of random Q values all negative.

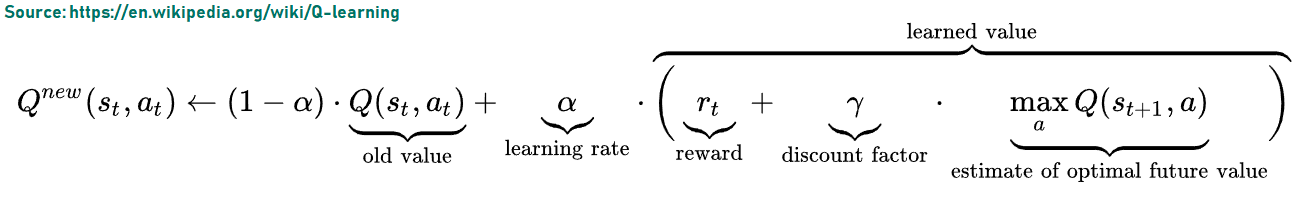

This table is our bible. We will consult this table to determine our moves. That final x3 is our 3 actions, and each of those 3 actions has a "Q value" associated with it. When we're being "greedy" and trying to "exploit" our environment, we will choose the action that has the highest Q value for this state. Sometimes, however, especially initially, we may instead wish to "explore" and just choose a random action. These random actions are how our model will learn better moves over time. So how do we learn over time? We need to update these Q values! How do we update those Q values?

Easy!

...Which is what we'll be talking about in the next tutorial!
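In the meantime, the exploit/explore choice described above can be sketched in a few lines. This is what is commonly called an epsilon-greedy rule. The function name `choose_action` and the `epsilon` parameter are assumptions for illustration and are not defined in this part of the tutorial, and `q_values` stands for the 3 Q values stored for one state.

```python
import random


def choose_action(q_values, epsilon):
    """Explore with probability epsilon, otherwise exploit the best-known action."""
    if random.random() < epsilon:
        return random.randrange(len(q_values))  # explore: any action at random
    # exploit: index of the highest Q value for this state
    return max(range(len(q_values)), key=lambda action: q_values[action])


example_q_values = [-1.5, -0.2, -0.9]  # made-up numbers for one state
print(choose_action(example_q_values, epsilon=0.0))  # 1 (always greedy)
print(choose_action(example_q_values, epsilon=1.0))  # 0, 1, or 2 (always random)
```

A value of epsilon between 0 and 1 mixes the two behaviors. This sketch only picks actions; it does not update any Q values.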
